Terraform and Ansible jobs can take hours to execute. What if I want Ansible jobs to start immediately after my infrastructure is provisioned through Terraform jobs? How would one hand over the monitoring of running jobs to another person? How would one get notified if a job fails? IT-Conductor's Git/Terraform/Ansible integration, which promotes Application Infrastructure as Code, is now GA per our Q1-2020 Features Announcement.

A normal workflow for a firm that uses Terraform/Ansible would include the following manual activities:

- Setting up a jump box machine with Terraform/Ansible.

- Pulling the Terraform/Ansible scripts from the repository to the jump box.

- Executing the Terraform jobs in the terminal to provision the infrastructure, and monitoring them manually until they complete.

- Executing the Ansible jobs in the terminal to install software once the infrastructure is provisioned, and monitoring those jobs manually until they complete.
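To make the manual steps above concrete, here is a minimal sketch of what an operator effectively does on the jump box: run the Terraform commands, wait, then run the Ansible playbook, stopping at the first failure. The file names `inventory.ini` and `site.yml` and the helper names are hypothetical; only the `terraform init`, `terraform apply -auto-approve`, and `ansible-playbook -i` invocations are standard CLI usage.

```python
import subprocess
from typing import Callable, List, Sequence

Runner = Callable[[Sequence[str]], int]


def run_in_terminal(cmd: Sequence[str]) -> int:
    """Run one command, streaming its output to the terminal; return the exit code."""
    return subprocess.run(list(cmd)).returncode


def manual_workflow(inventory: str, playbook: str) -> List[List[str]]:
    """Commands typed by hand on the jump box, in order (file names are hypothetical)."""
    return [
        ["terraform", "init"],
        ["terraform", "apply", "-auto-approve"],
        ["ansible-playbook", "-i", inventory, playbook],
    ]


def run_serially(commands: Sequence[Sequence[str]], runner: Runner = run_in_terminal) -> bool:
    """Run each command in order and stop at the first non-zero exit code."""
    for cmd in commands:
        if runner(cmd) != 0:
            return False
    return True
```

Calling `run_serially(manual_workflow("inventory.ini", "site.yml"))` would block the terminal for the full duration of both jobs, which is exactly the waiting and watching described above.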

IT-Conductor takes these pain points into consideration: it can configure multiple on-premises gateways that let us interact with the local environment, so we don’t need a jump box with pre-configured Terraform/Ansible. Portable versions of both tools are set up on the fly through a gateway before the jobs run. If the machine hosting one gateway fails, we can always transfer the jobs to another gateway.
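The failover idea can be illustrated with a small sketch. This is not IT-Conductor's implementation; the `Gateway` class and `pick_gateway` function are hypothetical names used only to show the principle of routing a job to the first gateway that is still available.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Gateway:
    """Hypothetical stand-in for an on-premises gateway."""
    name: str
    healthy: bool


def pick_gateway(gateways: Iterable[Gateway]) -> Optional[Gateway]:
    """Return the first healthy gateway in preference order, or None if all are down."""
    for gateway in gateways:
        if gateway.healthy:
            return gateway
    return None
```

With a list such as `[Gateway("gw-1", False), Gateway("gw-2", True)]`, the job would be handed to `gw-2`.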

We’ve also included the concept of a Process Definition, in which multiple activities are woven together so that they are processed serially. An activity can be, for example, a SQL script, shell script, Terraform script, or Ansible script.
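As a rough mental model, a process definition can be thought of as an ordered list of activities where a later activity only starts once the previous one has completed. The sketch below is an illustration under that assumption; the `Activity` and `ProcessDefinition` classes and the status strings are hypothetical and do not reflect IT-Conductor's internal object model.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Activity:
    name: str
    kind: str                     # e.g. "sql", "shell", "terraform", "ansible"
    action: Callable[[], bool]    # returns True when the activity succeeds


@dataclass
class ProcessDefinition:
    name: str
    activities: List[Activity] = field(default_factory=list)

    def run(self) -> Dict[str, str]:
        """Run activities in order; anything after a failure stays 'skipped'."""
        status = {activity.name: "skipped" for activity in self.activities}
        for activity in self.activities:
            succeeded = activity.action()
            status[activity.name] = "completed" if succeeded else "failed"
            if not succeeded:
                break
        return status
```

The design choice worth noting is that a failure stops the chain, so an installation activity never runs against infrastructure that was not provisioned.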

Each activity has a self-refreshing web-based log. With the help of remote backend features, the Ansible job collects each playbook task together with its own log. Handing over monitoring to another person is just a matter of sharing the URL of the running job. Even that is not required, because we encourage automation over manual monitoring: IT-Conductor can notify you by email/SMS when the job fails or completes.
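The notification idea can be sketched in a few lines: only a terminal status (failed or completed) produces a message, and the message carries the log URL so the recipient can jump straight to the job. The function name, status strings, and URL below are hypothetical; the sketch only builds the message with Python's standard `email` package and leaves delivery out.

```python
from email.message import EmailMessage
from typing import Optional

# Assumption for this sketch: only these two statuses trigger a notification.
NOTIFY_ON = {"failed", "completed"}


def build_notification(job: str, status: str, log_url: str, to_addr: str) -> Optional[EmailMessage]:
    """Return an email for a finished job, or None while the job is still running."""
    if status not in NOTIFY_ON:
        return None
    message = EmailMessage()
    message["To"] = to_addr
    message["Subject"] = f"[{status.upper()}] {job}"
    message.set_content(f"Job '{job}' {status}.\nLog: {log_url}\n")
    return message
```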

Using IT-Conductor as an Automation Engine

In this section, we discuss how to get the scripts from the Git repository, make Terraform/Ansible jobs out of them, weave the jobs together through a Process Definition, and finally automate the provisioning/installation of SAP/HANA.

Ansible Scripts Integration with IT-Conductor from GitHub

- IT-Conductor/automation-ansible Private Repository

https://github.com/IT-Conductor/automation-ansible

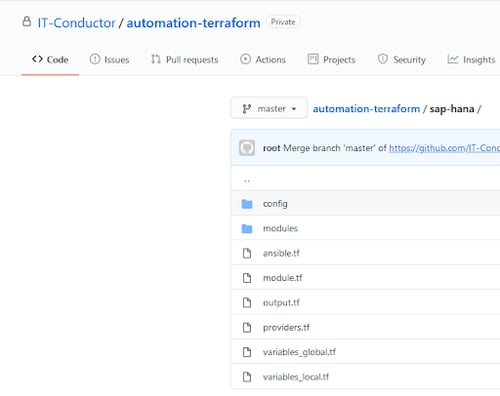

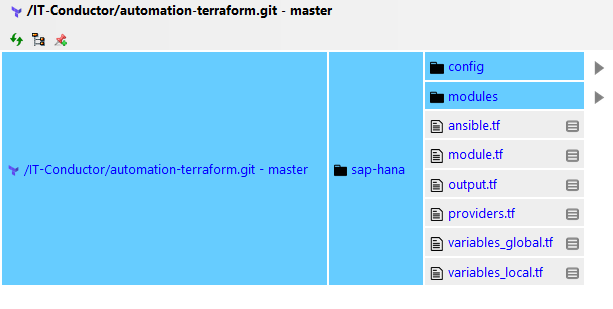

- IT-Conductor/automation-terraform Private Repository

https://github.com/IT-Conductor/automation-terraform

Figure 1a: Terraform Automation Scripts

IT-Conductor collects the GitHub files and maps them as file objects.

Figure 1b: Terraform Automation Scripts

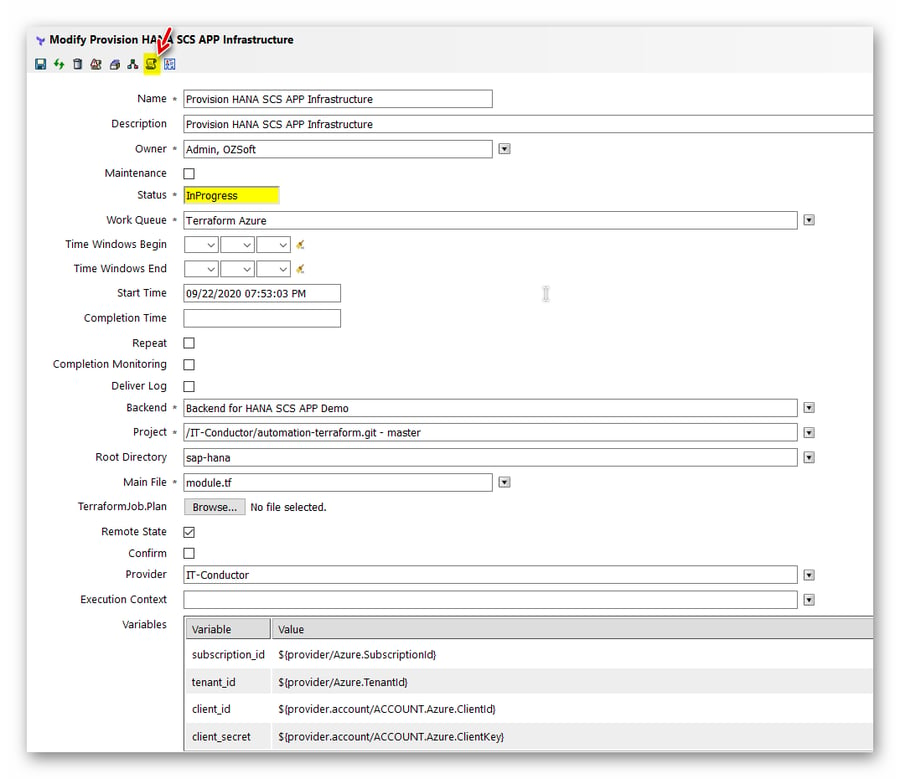

Making a Terraform Job

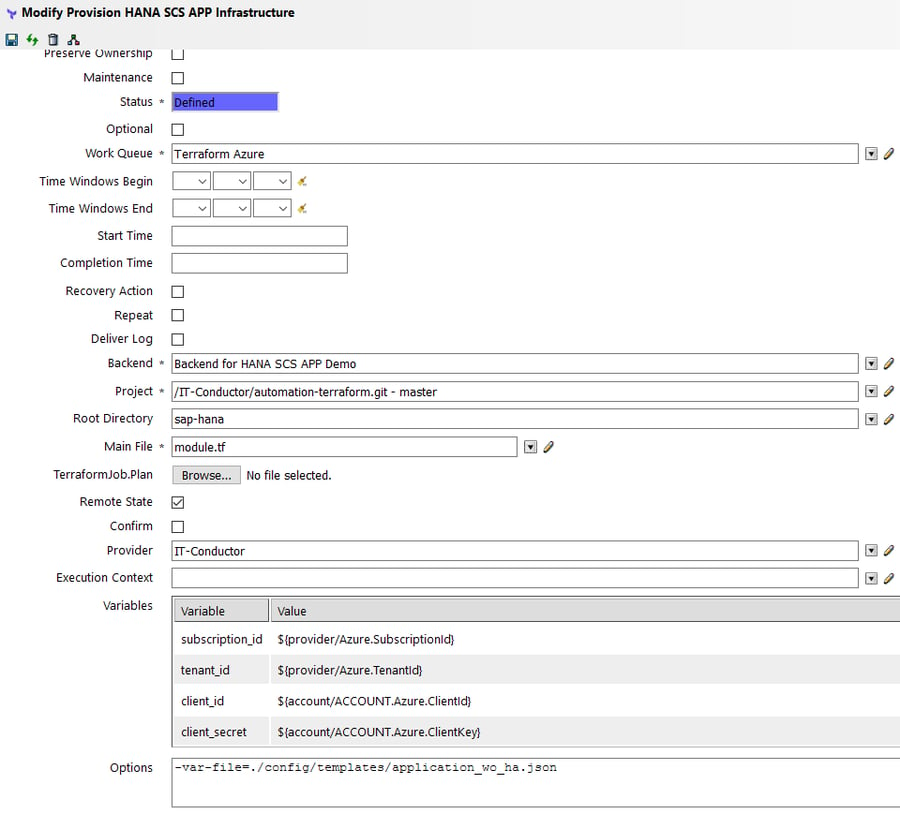

To make a Terraform job, we need four things: the Git repo project shown in the previous section, a Terraform backend object that captures the Terraform state file information, a provider containing the Azure subscription/tenant information, and the input variables for the Terraform script.
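The four ingredients map naturally onto what the Terraform CLI itself expects. The sketch below is an assumption-laden illustration, not IT-Conductor's object model: the `TerraformJob` class and its field names are hypothetical, while `-backend-config=key=value`, the `ARM_SUBSCRIPTION_ID`/`ARM_TENANT_ID` environment variables read by the Azure provider, and JSON-formatted variable files are standard Terraform conventions.

```python
import json
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class TerraformJob:
    """Hypothetical bundle of the inputs a Terraform job needs."""
    repo_url: str                 # Git repo project holding the scripts
    backend: Dict[str, str]       # where the state file lives
    subscription_id: str          # Azure provider details
    tenant_id: str
    variables: Dict[str, object] = field(default_factory=dict)

    def init_args(self) -> List[str]:
        """`terraform init` with one -backend-config flag per backend setting."""
        flags = [f"-backend-config={key}={value}" for key, value in sorted(self.backend.items())]
        return ["terraform", "init"] + flags

    def provider_env(self) -> Dict[str, str]:
        """Environment variables read by the Azure provider."""
        return {"ARM_SUBSCRIPTION_ID": self.subscription_id, "ARM_TENANT_ID": self.tenant_id}

    def tfvars_json(self) -> str:
        """Input variables rendered as the body of a terraform.tfvars.json file."""
        return json.dumps(self.variables, indent=2, sort_keys=True)
```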

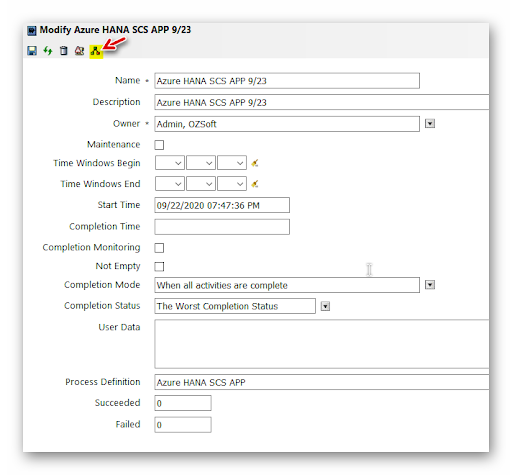

Figure 2: Modifying Terraform Job in IT-Conductor

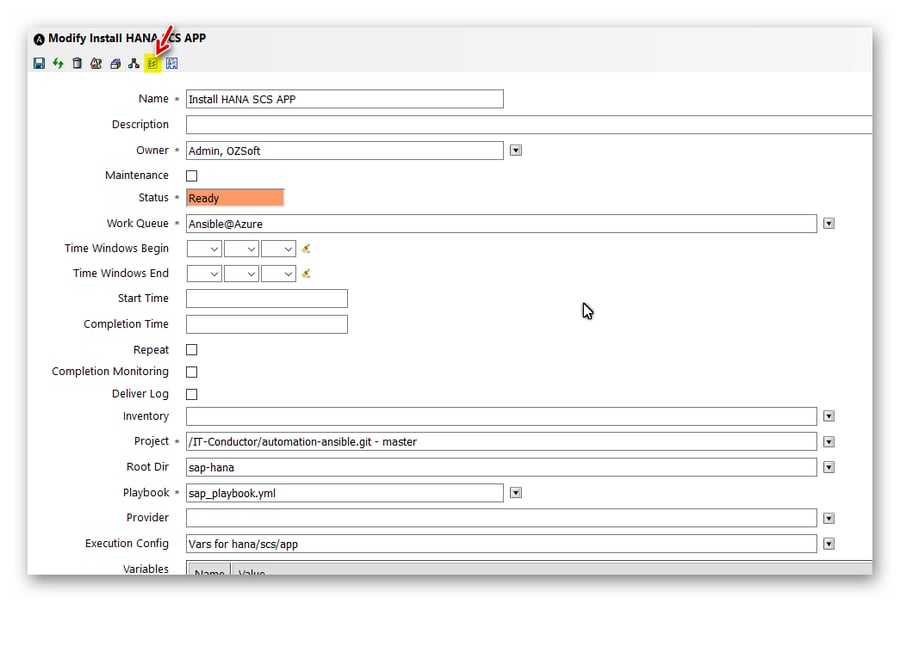

Making an Ansible Job

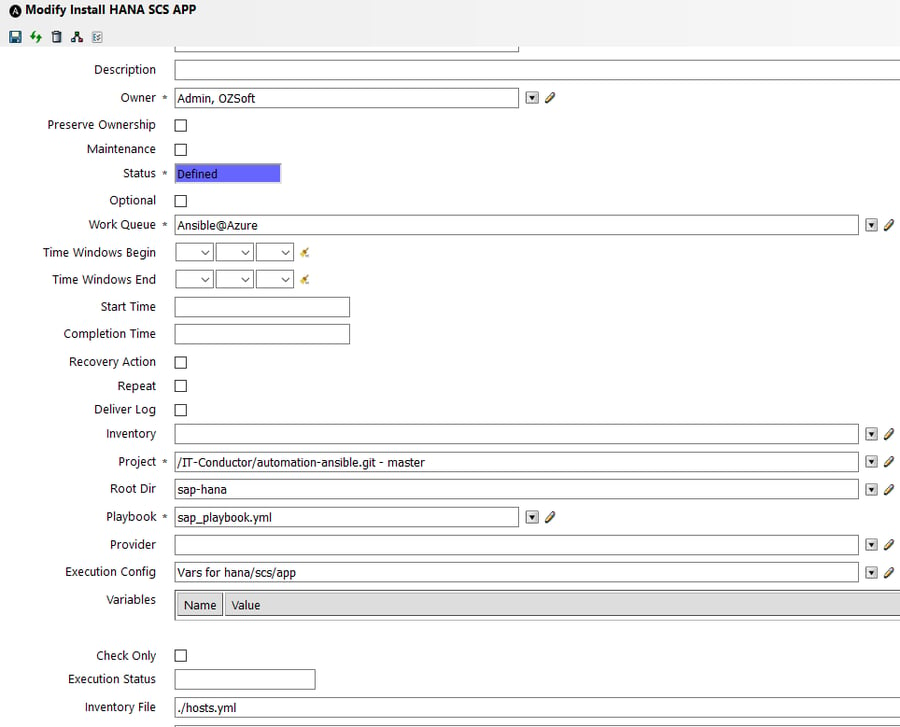

Similar to Terraform jobs, Ansible jobs need a Git repo project, along with an execution config object containing the variable data for the installation process and an inventory object or the location of the inventory file.
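In plain Ansible terms, those inputs end up as arguments to `ansible-playbook`. The sketch below shows one way this could look, assuming the execution config is a flat dictionary of variables; the function name, playbook name, and variable names are hypothetical, while `-i` and `--extra-vars` with a JSON string are standard `ansible-playbook` options.

```python
import json
from typing import Dict, List


def ansible_command(playbook: str, inventory: str, extra_vars: Dict[str, object]) -> List[str]:
    """Build an ansible-playbook command from an inventory and execution variables."""
    command = ["ansible-playbook", "-i", inventory]
    if extra_vars:
        # --extra-vars accepts a JSON document as well as key=value pairs.
        command += ["--extra-vars", json.dumps(extra_vars, sort_keys=True)]
    command.append(playbook)
    return command
```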

Figure 3: Modifying Ansible Job in IT-Conductor

Weaving Terraform and Ansible Jobs together through Process Definition

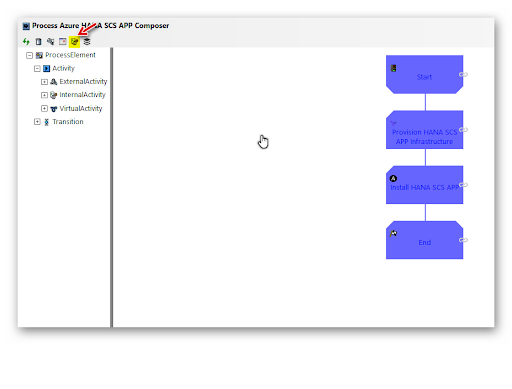

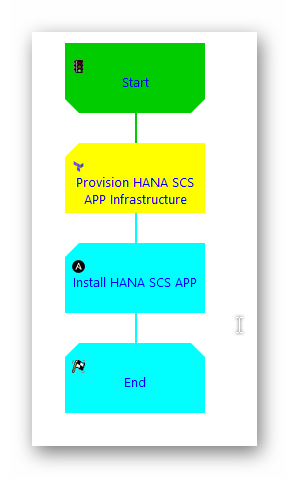

IT-Conductor has a framework for automating different activities by weaving them together so that they run serially. A Process Definition is a template for processes that can be scheduled to run at the desired time.

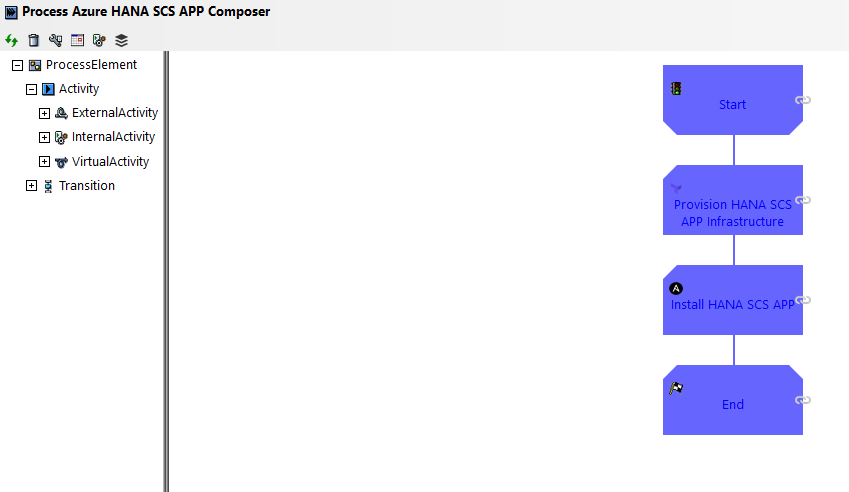

Figure 4a: Process Composer

In the picture above, the Process Definition template shows that the Terraform activity “Provision HANA SCS APP Infrastructure” provisions the required infrastructure; when it completes, the Ansible activity “Install HANA SCS APP” starts immediately to install the configured SAP packages.

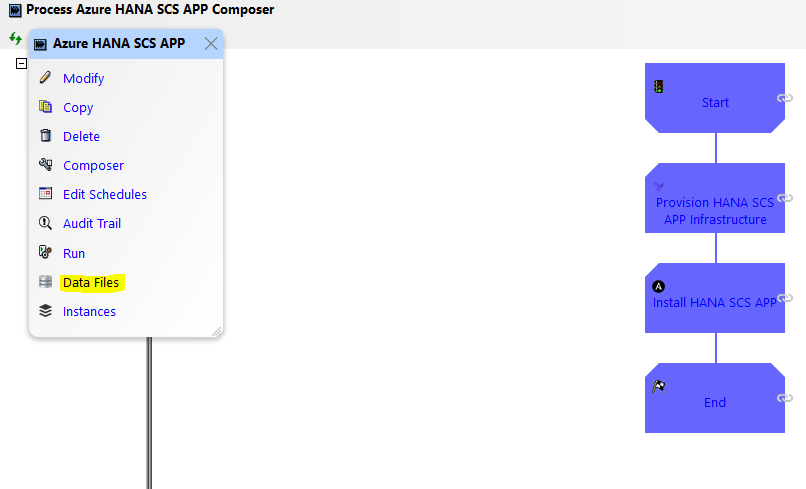

Terraform/Ansible jobs can be fed input files and can generate output files. In the flow above, when the Terraform job completes, it generates an inventory as an output file, which the subsequent Ansible job needs as an input file. The Process Definition supports this through the ‘Data Files’ feature.
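To illustrate the kind of hand-off a data file carries, the sketch below converts the JSON printed by `terraform output -json` into an INI-style Ansible inventory. It assumes, purely for illustration, that each Terraform output whose value is a list holds host addresses and that the output name doubles as the Ansible group name; the function name and this convention are hypothetical, not something IT-Conductor prescribes.

```python
import json
from typing import List


def inventory_from_outputs(outputs_json: str) -> str:
    """Turn `terraform output -json` text into an INI-style Ansible inventory.

    Assumption: list-valued outputs are host lists, named after their group.
    """
    outputs = json.loads(outputs_json)
    lines: List[str] = []
    for group in sorted(outputs):
        value = outputs[group]["value"]
        if not isinstance(value, list):
            continue  # skip scalar outputs such as names or IDs
        lines.append(f"[{group}]")
        lines.extend(str(host) for host in value)
        lines.append("")  # blank line between groups
    return "\n".join(lines)
```

Written to a file, the result can be passed to the subsequent Ansible job as its inventory.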

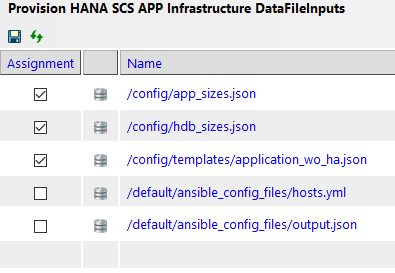

In Process Definition, one can add multiple data files that can be read/written by its activities.

Figure 4b: Data Files Option in Process Composer

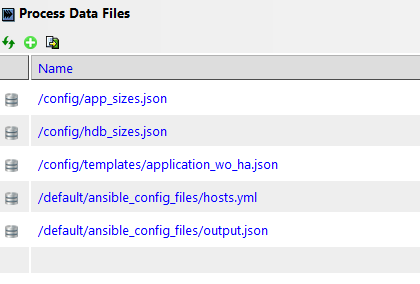

Figure 5: List of Process Data Files

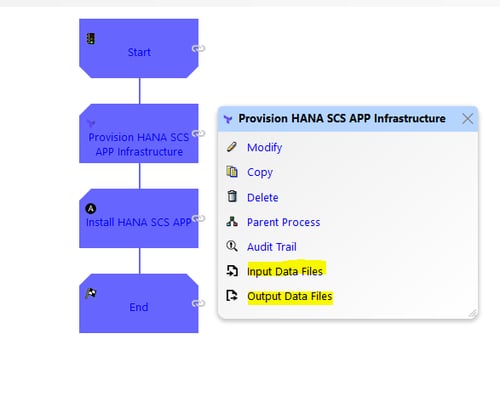

In each activity, these data files are available for selection as input or output data files.

Figure 6: Input and Output Data Files

Figure 7: List of Input Data Files

Running the Jobs in Process

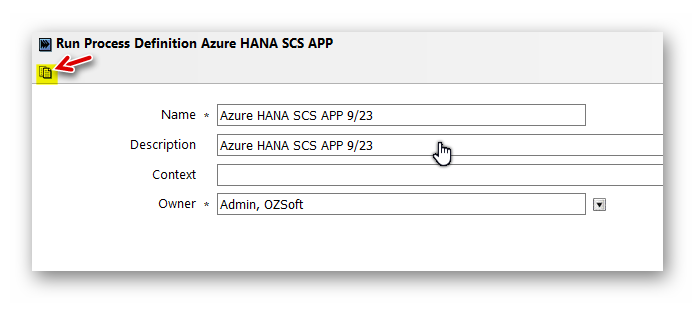

Besides scheduling the Process Definition, one can run it manually at any time by clicking Run/Instantiate.

Figure 8a: Run/Instantiate Process Definition

One can simply name the process and then run it.

Figure 8b: Run/Instantiate Wizard

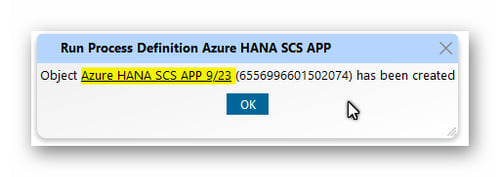

Then click the object link when the pop-up window appears.

Figure 9: Run Process Definition Pop-up Window

From there one can go to the Process Flowchart:

Figure 10: Navigate to Process Flowchart

And view job status:

Figure 11: Job Status as shown in Process Definition

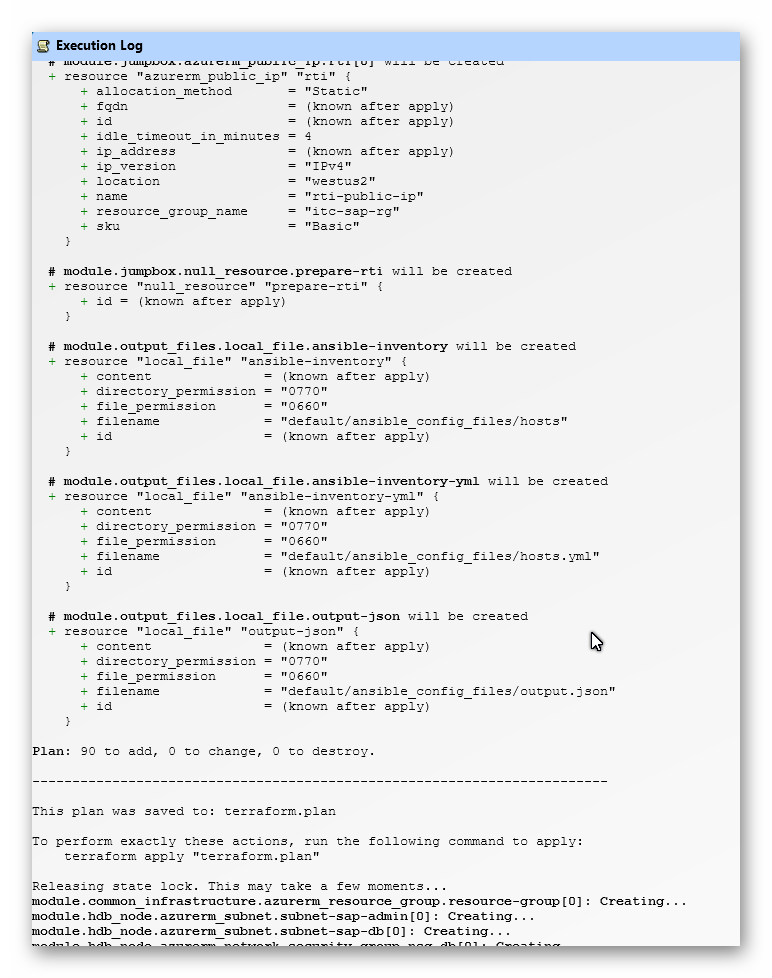

To look into each job's progress, click on a particular job and then the execution log.

Figure 12a: View Execution Log

Figure 12b: Execution Log

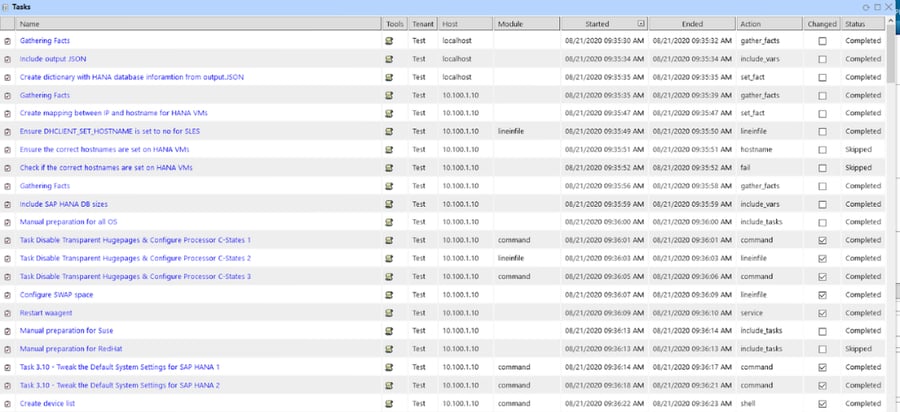

One can also view individual Ansible tasks.

Figure 13a: View Ansible Tasks

Figure 13b: Ansible Tasks as Viewed from IT-Conductor

Overall, there are several advantages to using IT-Conductor as an automation server:

- IT-Conductor uses on-premises gateways as jump servers, alleviating the need to log in remotely

- IT-Conductor's security layer enforces role-based access and attribution of executed activities

- IT-Conductor's graphical process design, orchestration, and scheduling capabilities are fully integrated

- IT-Conductor data that is already configured/discovered, including host, application, and cloud credentials and staged Git repositories, is seamlessly integrated into Ansible

- Dynamic inventories are based on dynamic IT-Conductor systems and application groups

- Ansible task log recording is available through the IT-Conductor native UI or automated delivery over email