Users call and escalate about poor performance, and the team scrambles to find the root cause, but on the incident call each subject matter expert shows different charts from different tools. The incident manager goes around the room, prompting the Application team, then the Basis team, the DBA team, the OS team, the Storage team, and finally the Network team, to see whether anyone knows the cause of the issues. Some think they have found the culprit because their data shows some spikes, but they are not sure, because they do not know what else was happening on the system in other infrastructure or application components.

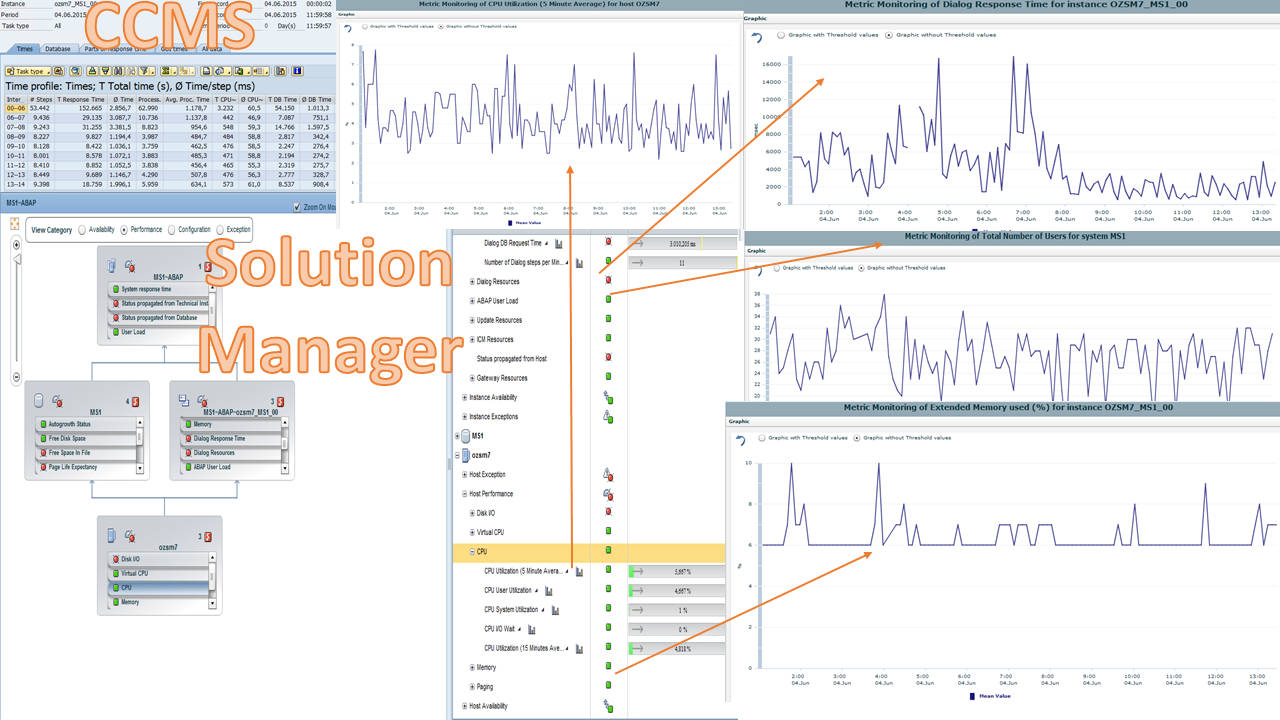

The charts may look something like these views from CCMS and Solution Manager, which are hard to analyze because they are not aligned with one another. As a result, the teams most likely cannot agree with confidence on the root cause.

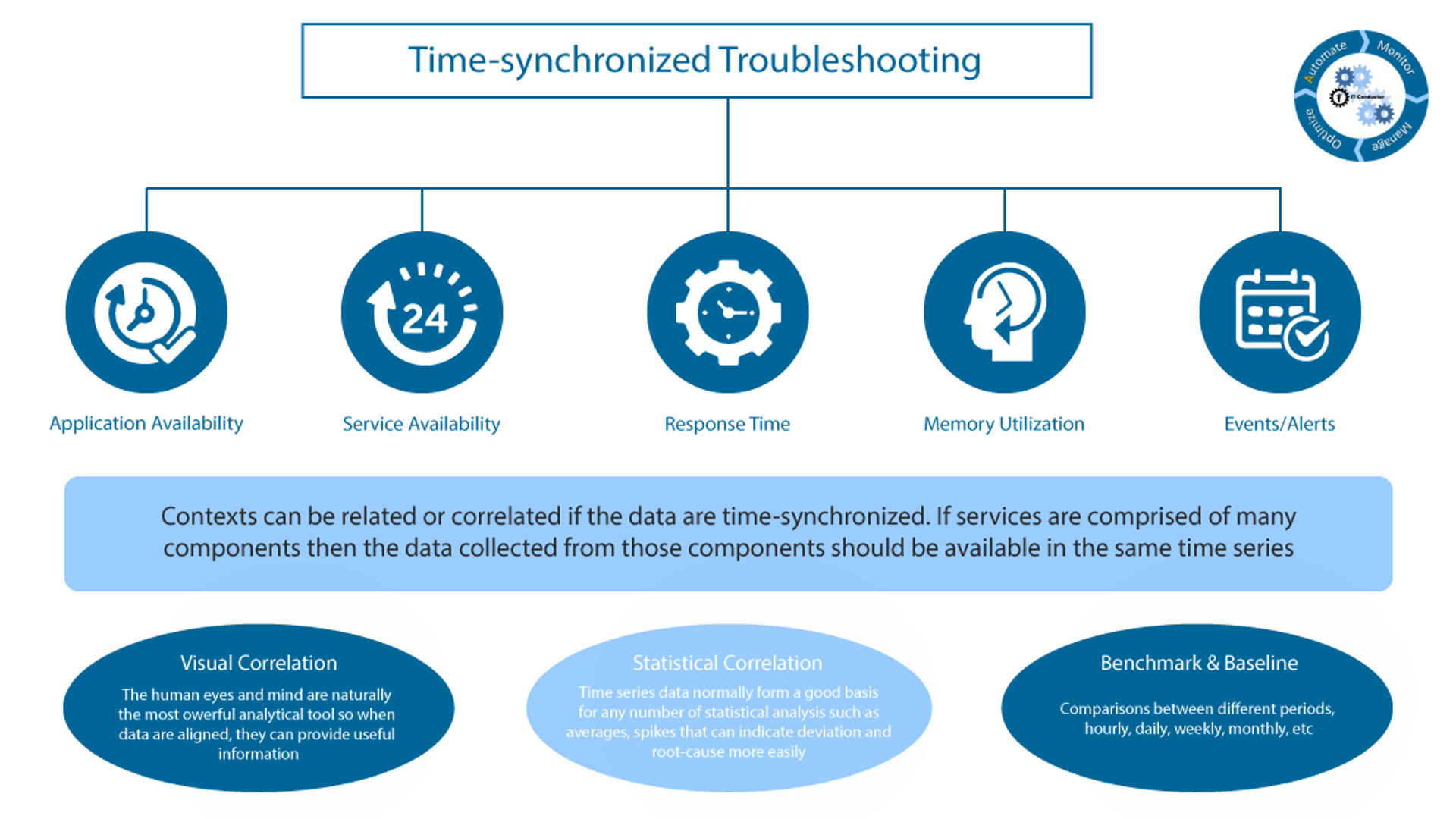

Time-Synchronized Troubleshooting

Effective and efficient troubleshooting requires aligned context, in which data such as alerts and metrics are time-synchronized. If a service is composed of many technology components, the data collected from those components should be available on a common timeline to enable:

- Visual Correlation: the human eye and mind are naturally the most powerful analytical tools, so when data are aligned, they can pick out useful information
- Statistical Correlation: time series data form a good basis for statistical analyses, such as averages and spike detection, that can indicate deviations and point to the root cause more easily
- Benchmarks & Baselines: comparisons between different periods (hourly, daily, weekly, monthly, etc.)
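To make the idea concrete, here is a minimal Python sketch of time-synchronizing metrics from two sources and then correlating them. Everything in it is an illustrative assumption rather than part of any SAP tool: the metric names (`dialog_response_ms`, `db_wait_ms`), the sample values, the one-minute bucket size, and the "twice the median" spike rule are all hypothetical.

```python
from collections import defaultdict
from math import sqrt
from statistics import mean, median


def align(series_by_source, bucket_seconds=60):
    """Average each source's (epoch_seconds, value) samples into shared time buckets."""
    buckets = defaultdict(lambda: defaultdict(list))
    for source, samples in series_by_source.items():
        for ts, value in samples:
            bucket = ts - ts % bucket_seconds  # start of the bucket this sample falls in
            buckets[bucket][source].append(value)
    sources = list(series_by_source)
    # Keep only buckets in which every source reported at least one sample.
    return {
        bucket: {s: mean(vals[s]) for s in sources}
        for bucket, vals in sorted(buckets.items())
        if all(s in vals for s in sources)
    }


def pearson(xs, ys):
    """Pearson correlation coefficient of two equally long sequences."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy) if sx and sy else 0.0


def spikes(aligned, source, factor=2.0):
    """Buckets where a metric exceeds `factor` times its median (a crude baseline)."""
    baseline = median(row[source] for row in aligned.values())
    return [b for b, row in aligned.items() if row[source] > factor * baseline]


# Hypothetical samples from two tools whose clocks tick at slightly different offsets.
metrics = {
    "dialog_response_ms": [(0, 400), (65, 420), (130, 1500), (185, 1600), (250, 450)],
    "db_wait_ms": [(10, 50), (70, 55), (125, 900), (190, 950), (245, 60)],
}

aligned = align(metrics)
r = pearson(
    [row["dialog_response_ms"] for row in aligned.values()],
    [row["db_wait_ms"] for row in aligned.values()],
)
response_spikes = spikes(aligned, "dialog_response_ms")
db_spikes = spikes(aligned, "db_wait_ms")
```

Once both metrics share the same buckets, the spikes in response time and database wait land in the same minutes and the correlation coefficient is close to 1, which is the kind of evidence the teams on the incident call were missing. A high correlation still only suggests where to look; it does not prove the cause.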

Let's Simplify IT

Here's an easy troubleshooting overview chart that brings the important KPIs into one view, with options to drill in, add further troubleshooting context on the same chart, or compare different periods, all from a service monitoring standpoint.

All synchronized! Want to see more?